The Deepfake AI Crisis Your PR Plan Doesn’t Cover

You've got a crisis communications plan. You've got brand guidelines. You've got a social media policy that took three rounds of edits to finalize.

But here's a question worth sitting with for a moment: what happens when a video of your CEO surfaces announcing a layoff that never happened? Or when your CFO gets a phone call that sounds exactly like your Managing Director asking for an urgent wire transfer?

Most marketing managers don't have an answer to that. Not yet.

The threat that's already here

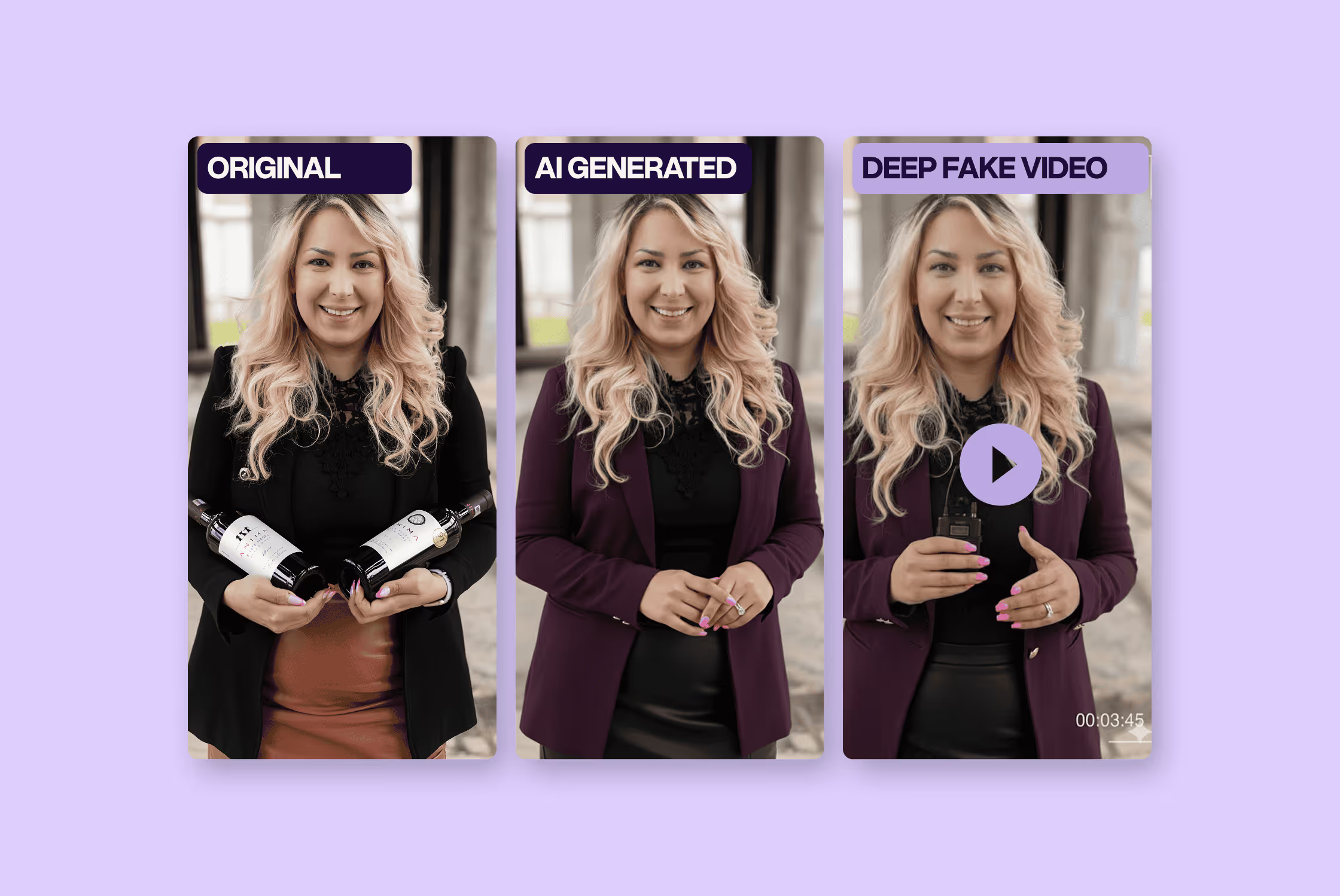

When people talk about deepfakes, they tend to picture something cinematic: a manipulated political speech, a celebrity scandal, a viral hoax. Something that happens to someone else. Someone famous. Someone with a PR team on speed dial.

But the threat that's actually heading toward mid-size businesses looks a lot less dramatic and a lot more targeted.

Voice cloning is the one keeping fraud prevention teams up at night. It takes just a few minutes of publicly available audio to replicate someone's tone, cadence, and speech patterns with unnerving accuracy. A conference talk on YouTube. A podcast appearance. A LinkedIn Live. Most senior leaders at growing companies have already put enough of themselves online that the raw material is sitting there, ready to be used. The resulting fake call doesn't need to fool a security expert. It just needs to fool one person on your finance team, under time pressure, on a Thursday afternoon.

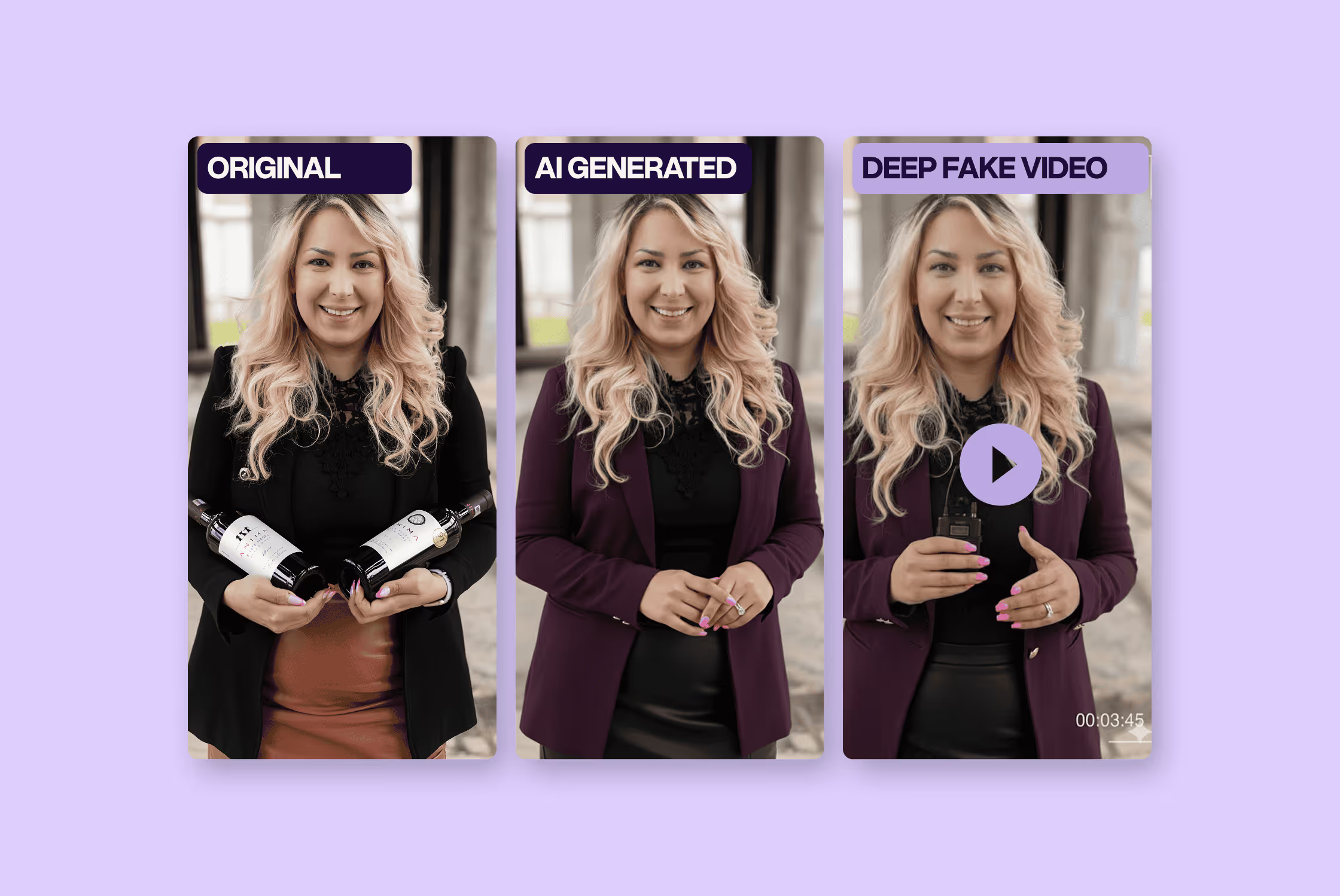

The reputational version operates differently, but the damage mechanism is the same: speed. A fabricated video of your leadership announcing a scandal, a bankruptcy, or a discriminatory statement doesn't need to be technically perfect. It needs to be believable for long enough to spread. A few hundred shares before anyone official responds can be enough to embed the story in people's memories. You can issue a correction. You can prove it's fake. But you cannot un-ring that bell.

There's a reason this matters specifically to marketing and communications teams: you are the function responsible for what the brand says, where it says it, and how fast it responds when something goes wrong. IT can patch a vulnerability. Legal can draft a letter. But the moment a fake video of your CEO lands on social media, the clock is ticking on your desk.

The trust problem

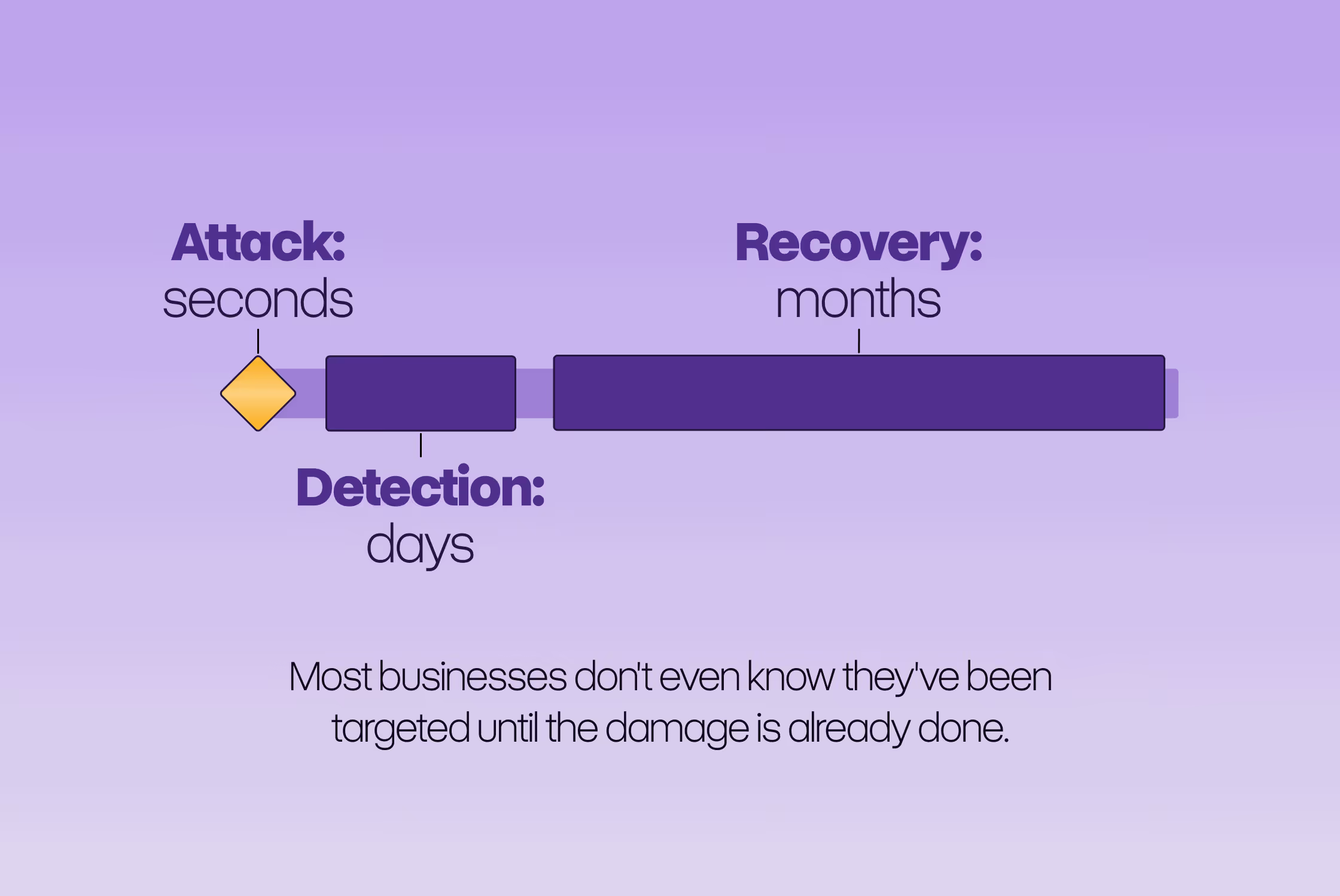

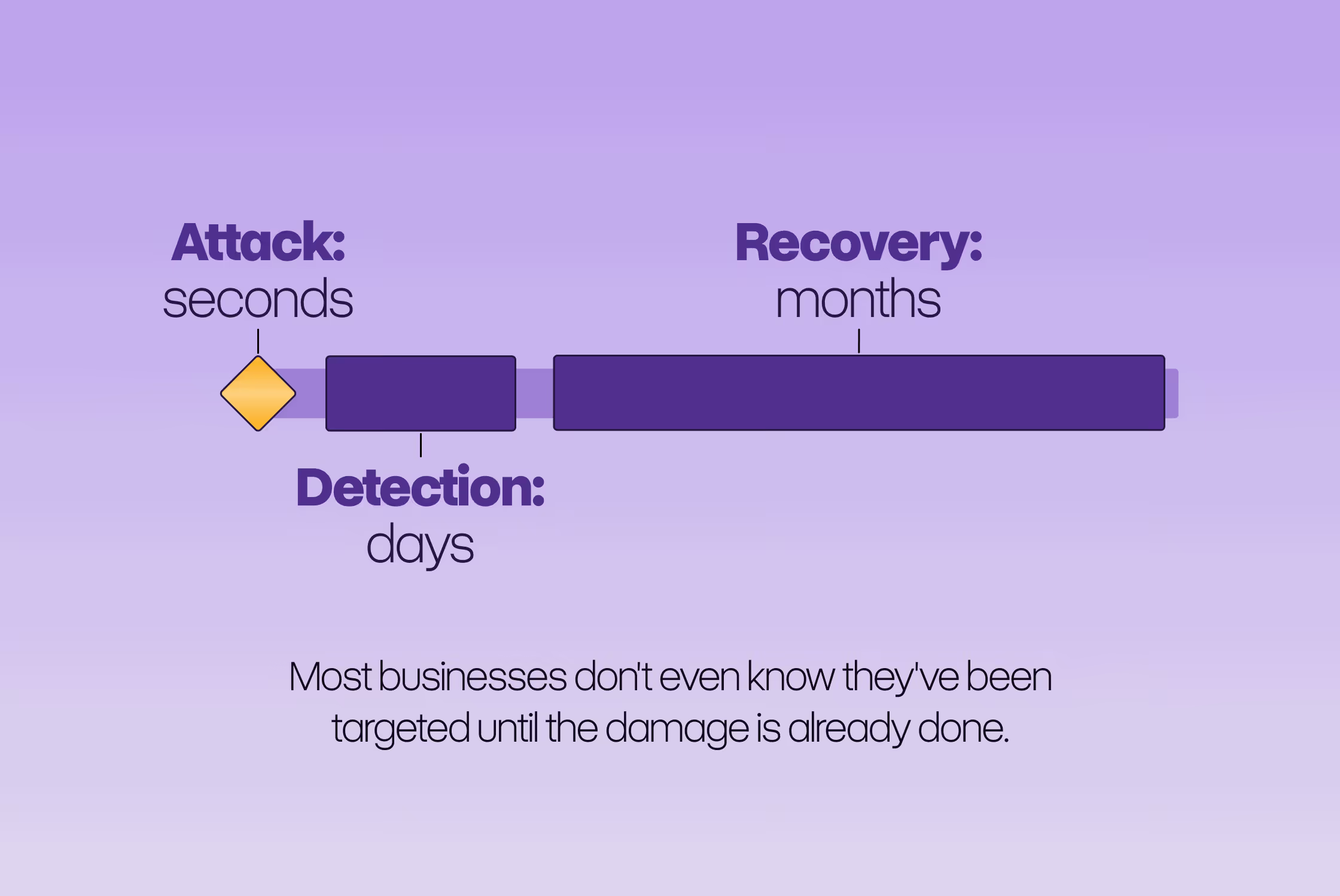

The thing that makes deepfakes so difficult to defend against isn't the technology itself, but the timing gap.

Creating a convincing fake: seconds to minutes.

Detecting that a fake exists: hours to days.

Recovering your brand's reputation: months.

That gap has nothing to do with technology, and everything to do with communications. And it's a gap that traditional crisis plans weren't built for, because those frameworks assume you know a crisis is happening. With deepfakes, you find out last - after customers, journalists, and your own employees have already seen it and formed an opinion.

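The other thing traditional crisis thinking gets wrong here is the correction assumption. We tend to believe that if we can prove something is false, we can undo the damage. Research on misinformation tells a different story. Psychologists call it the continued influence effect: a false claim keeps shaping what people believe even after they have seen and accepted a correction.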

People remember the headline. Not the correction.

The initial story creates a mental image that a subsequent denial, however well-crafted, rarely fully dislodges. This isn't a failure of intelligence - it's just how memory and narrative work. A good deepfake gives people a vivid, specific, emotional experience. Your correction gives them a press statement.

This is what makes preparation so much more valuable than response. Not because you can prevent a fake from being made, but because a team that has thought through the scenario in advance moves faster, makes better decisions under pressure, and wastes less time figuring out who's supposed to do what.

What you can actually do (without a big budget)

None of this requires enterprise software or a dedicated security team. But it does require doing these things before you need them, not during.

Verification protocol for high-stakes requests

Any wire transfer, sensitive decision, or urgent action that arrives via call, voicemail, or video should be verified through a second channel - using a contact you already have saved, not one provided in the message. This sounds almost too obvious, but it's the single most effective defence against voice-cloning fraud, and most companies haven't formalized it. The reason it works is that it breaks the attacker's control over the communication channel. They can clone a voice; they can't easily intercept an outbound call you make to a number already in your address book.
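If it helps to see the rule written down as logic, here is a minimal sketch in Python. It assumes a hypothetical saved-contacts directory and a made-up `Request` record; the names, the placeholder phone numbers, and the wording of the returned actions are illustrations, not part of any real tool. The point it shows is that the callback number offered in the message is never used.

```python
from dataclasses import dataclass
from typing import Optional

# Channels named in the text above that cloned audio or video can imitate.
SPOOFABLE_CHANNELS = {"call", "voicemail", "video"}

# Hypothetical directory of contacts saved BEFORE any request arrived.
SAVED_CONTACTS = {
    "managing.director": "+1-555-0100",  # placeholder number
}


@dataclass
class Request:
    requester: str                          # who the message claims to be from
    channel: str                            # how the request arrived
    high_stakes: bool                       # wire transfer, sensitive decision, urgent action
    callback_number: Optional[str] = None   # number offered in the message itself


def verification_step(req: Request) -> str:
    """Return what a team member should do before acting on the request."""
    if not (req.high_stakes and req.channel in SPOOFABLE_CHANNELS):
        return "proceed with normal approval process"
    saved = SAVED_CONTACTS.get(req.requester)
    if saved is None:
        return "pause: no saved contact on file, escalate to a manager"
    # req.callback_number is deliberately ignored: the sender controls it.
    return f"pause: call back on saved number {saved}"
```

A call that claims to come from the Managing Director and offers its own callback number still gets routed to the number already on file.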

A code word system with leadership

Pick a phrase. Something random and easy to ask for naturally in conversation. Share it only with the people who might ever need to authorize sensitive decisions. Anyone making a high-stakes request should be able to confirm it - and if they can't, that alone is enough to pause and verify. Rotate it every 90 days. The reason this works is that it's shared in person or through a pre-existing secure channel, and it requires real-time knowledge that a cloned voice call can't provide.
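The 90-day rotation is easy to forget, so a small reminder script can help. The sketch below is one possible way to do it, assuming a hypothetical word list and function names of my own choosing. It only suggests a phrase and checks whether rotation is due; the phrase itself should still be shared in person or through a channel you already trust, never stored in chat or email.

```python
import secrets
from datetime import date, timedelta

ROTATION_DAYS = 90  # rotation interval suggested in the text above

# Hypothetical word list for illustration; a real one should be much longer.
WORDS = ["lantern", "harbor", "maple", "copper", "orbit", "velvet", "granite", "juniper"]


def new_code_phrase(n_words: int = 2) -> str:
    """Suggest a random phrase using a cryptographically secure generator."""
    return " ".join(secrets.choice(WORDS) for _ in range(n_words))


def rotation_due(last_rotated: date, today: date) -> bool:
    """True once ROTATION_DAYS or more have passed since the last rotation."""
    return today - last_rotated >= timedelta(days=ROTATION_DAYS)
```

Using `secrets` rather than `random` is a deliberate choice here: the phrase is only useful if an outsider can't guess it.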

Know what a fake looks like - and know the limits of that knowledge

The most common visual tells - unnatural blinking, blurry hairlines, lips slightly out of sync, backgrounds that flicker - are real, and worth knowing. But they're also becoming less reliable as the technology improves. The more durable skill is developing a general suspicion of urgency. Deepfake-based fraud almost always involves manufactured time pressure: "I need this done now," "don't loop in anyone else," "this is sensitive." That urgency isn't incidental - it's the mechanism. It's designed to bypass the part of your brain that would otherwise pause and ask questions. If something feels rushed in a way that's out of character, that feeling is worth acting on regardless of whether you can spot the technical flaws in the video.

If you do want to verify a specific piece of video or audio, free tools like Hive Moderation exist and are a reasonable starting point - but treat them as one data point, not a definitive verdict.

Set up monitoring before you need it

Create a Google Alert for your CEO's name paired with the word "video." Set up alerts for your company name paired with terms like "statement," "announcement," or "leak." Check quarterly for lookalike domains - variations of your company name that someone could register to impersonate you (something like ‘yourcompany-official.com’, ‘yourcompany-news.com’, and so on). None of this takes long to set up, and it lets you document as well as detect. If a situation escalates, having a timestamped record of when you first identified something and what you did about it matters enormously, both internally and externally.
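To make the quarterly check less of a chore, you could generate the list of things to look for with a few lines of Python. This is a rough sketch under stated assumptions: the function names are hypothetical, the suffixes are just the two examples from the paragraph above, and the alert strings are meant to be pasted into Google Alerts by hand. It doesn't talk to any service or check whether a domain is registered.

```python
from datetime import datetime, timezone


def lookalike_domains(company, suffixes=("official", "news"), tlds=("com",)):
    """Build candidate lookalike domains to check by hand each quarter."""
    return [f"{company.lower()}-{s}.{t}" for s in suffixes for t in tlds]


def alert_queries(ceo_name, company, terms=("statement", "announcement", "leak")):
    """Build search strings to paste into an alert service manually."""
    queries = [f'"{ceo_name}" video']
    queries += [f'"{company}" {term}' for term in terms]
    return queries


def log_entry(note):
    """Prefix a note with a UTC timestamp for the incident record."""
    return f"{datetime.now(timezone.utc).isoformat()} {note}"


if __name__ == "__main__":
    # "yourcompany" and "Jane Doe" are placeholders.
    for domain in lookalike_domains("yourcompany"):
        print(domain)
    for query in alert_queries("Jane Doe", "YourCompany"):
        print(query)
    print(log_entry("Quarterly lookalike-domain check completed"))
```

Appending each `log_entry` line to a shared document gives you the timestamped record without any extra tooling.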

Decide now who speaks, where, and how fast

If a deepfake went viral tomorrow morning, who in your organization makes the call on the public response? Which platform do you respond on first? What's the maximum time between awareness and first public statement? These are not questions you want to be answering for the first time at 8am on a Tuesday when your inbox is filling up with panic. Write down the answers - even informally, even in a shared doc - and make sure the relevant people know they exist. You don’t need a perfect plan. You just shouldn’t be the team that spends the first two hours of a crisis figuring out who's supposed to be doing something.
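If a shared doc feels too loose, the same three answers fit in a tiny structured record. The sketch below is one way to write it, and every value in it is a placeholder: the role, the platform, and the two-hour limit are assumptions for illustration, not recommendations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ResponsePlan:
    decision_owner: str               # who makes the call on the public response
    first_platform: str               # where the first statement goes
    max_time_to_statement: timedelta  # longest acceptable gap after awareness

    def statement_deadline(self, aware_at: datetime) -> datetime:
        """Latest time the first public statement should be out."""
        return aware_at + self.max_time_to_statement


# Placeholder values; replace them with your own team's decisions.
PLAN = ResponsePlan(
    decision_owner="Head of Communications",
    first_platform="LinkedIn",
    max_time_to_statement=timedelta(hours=2),
)
```

Calling `PLAN.statement_deadline(...)` with the moment you became aware turns the written commitment into a concrete time on the clock.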

How I feel about all of this

The tools that make deepfakes possible are the same tools that are genuinely changing how content gets made. Voice cloning, AI avatars, synthetic video: they all have real creative value. That duality is uncomfortable, but it's real, and pretending otherwise doesn't help anyone prepare for the actual risk.

What separates the brands that come through a deepfake incident intact from the ones that don't isn't budget or technology. It's whether someone, at some point before the crisis, sat down and thought: what would we actually do?

The best time to prepare was yesterday.

The second best time is now.

The worst time? Right after it happens to you.
